Introduction

Stack and heap are memory regions with different mechanisms for allocating and managing memory resources. Both serve as data storage areas, but their use cases, lifecycles, and functionality vary.

In some programming languages, developers can allocate memory manually. However, whether data resides on the stack or heap often depends on the nature of the data and language or platform constraints.

Learn how the stack and heap differ and why understanding those differences is instrumental for efficient memory management and program execution.

What Is the Stack?

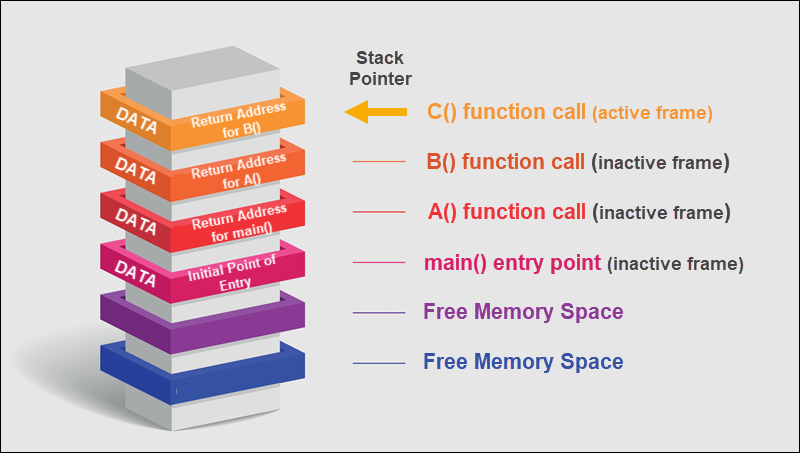

The stack is a specific memory region where computer programs temporarily store data. It is a contiguous block of memory where data is placed when a function is called and removed when that function returns.

Stack memory operates on the Last-In-First-Out (LIFO) principle. The most recent item added to the stack is the first item to be removed (popped) from the stack.

When a program calls a function, a new item called a stack frame is created and pushed onto the stack for that call. Depending on the platform's calling convention, a stack frame typically contains:

- Local function variables.

- Parameters passed to the function.

- The return address that tells the program where to continue executing once the function completes.

- Other administrative details, such as the base pointer of the previous frame.

When the function returns, its stack frame is popped off, and execution continues at the return address stored in the frame.

Stack Advantages

Utilizing stack memory when executing programs has the following advantages:

- Fast Allocation/Deallocation. Allocating and deallocating memory on the stack is fast because it only requires adjusting the stack pointer. On most common architectures, including x86-64 and ARM, the stack grows toward lower addresses, so the stack pointer is decremented to allocate space and incremented to release it. Some architectures use the opposite direction.

- Automatic Memory Management. Memory space on the stack is managed automatically. Space for local variables is automatically allocated when a function is called and deallocated when a function exits.

- No Fragmentation. Because memory is allocated and released in strict LIFO order, free stack space always remains one contiguous region, so the stack does not fragment.

- Quick Data Access. The sequential nature of stack memory allocation generally ensures good cache locality. This results in quicker data access and boosts performance.

- Predictable Lifespan. Variables on the stack exist only for the duration of the function or scope they are in. This predictability makes code easier to write and read.

- Less Overhead. Stack allocation has minimal overhead because it requires no search for free blocks and no per-block bookkeeping metadata.

Stack Disadvantages

Stack memory has many advantages, but it also has the following limitations:

- Limited Size. Stack memory is limited in size, and once it is exhausted, it results in a stack overflow, causing the program to crash. This makes the stack unsuitable for storing large amounts of data.

Note: The limited size of the stack is a constraint, but it also acts as a protection mechanism. A stack overflow is usually detected right away (for example, when the program touches a guard page), and the program terminates. In contrast, a memory leak in the heap might go unnoticed for a long time, potentially until it consumes all available system memory.

- Limited Access. Following the Last-In-First-Out (LIFO) principle, push and pop operations work only on the top of the stack. A function can freely access its own local variables, but accessing a frame that has already been popped, for example through a pointer to a local variable of a function that has returned, is undefined behavior.

- Variable Lifespan. Variables are automatically deallocated once their function or code block ends, which makes the stack unsuitable for data that needs to persist across multiple function calls.

- No Resizing. A block allocated on the stack cannot be resized. For instance, if you allocate too small an array on the stack, you cannot enlarge it the way you can enlarge dynamically allocated memory.

- No Manual Control. While the automatic nature of stack memory can be seen as an advantage, it is a disadvantage when more control over memory allocation and deallocation is required.

What Is the Heap?

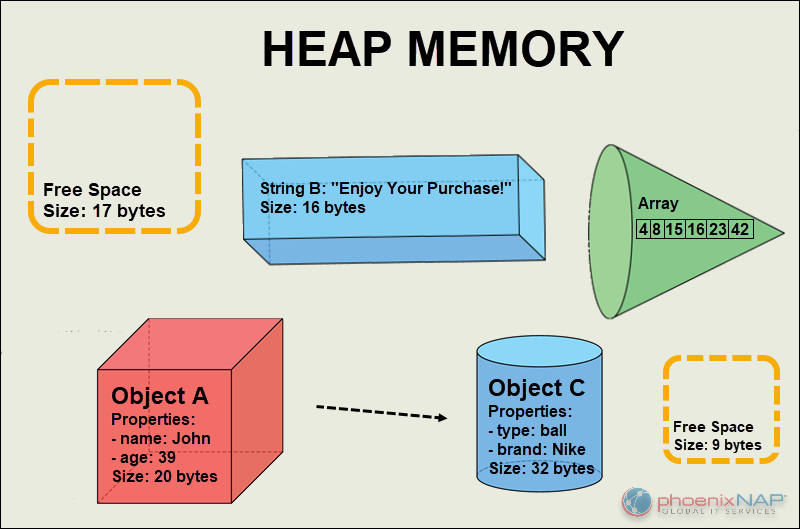

The heap is a region of computer memory used for dynamic memory allocation. In languages with manual memory management, heap memory must be explicitly allocated and deallocated. For example, C developers use the malloc() and free() functions, while C++ developers typically use the new and delete operators.

Heap is typically used:

- When the memory requirement for a data structure, such as an array or object, is unknown until runtime.

- To store data that should persist beyond the lifespan of a single function call.

- When there is a possibility that allocated memory might need resizing in the future.

Heap Advantages

Heap memory allocation has several advantages:

- Dynamic Allocation. Programs can allocate memory at runtime based on their needs, resulting in more efficient memory use.

- Variable Lifetime. Objects allocated on the heap persist until they are explicitly deallocated or the program ends. They can outlive the function call that created them, which is especially useful for data that needs to persist across multiple function calls or even for the duration of the program.

- Large Memory Pool. The heap provides a much larger memory pool than the stack. It is suitable for allocating larger data structures or ones that might grow, like arrays or lists.

- Flexibility. Since the heap can grow and shrink within the system's available memory, it is more flexible in handling needs that might change during a program's execution.

- Globally Accessible. Heap memory is not bound to the call stack: any part of the code that holds a pointer or reference to a heap block can access and modify it. This makes it easy to share data across different parts of a program or even between threads.

- Reusability. After memory on the heap is deallocated, it can be reused for future allocations, making it a recyclable resource.

- Support for Complex Structures. Heap allocation makes it possible to build and manage complex data structures like trees, graphs, and linked lists, which often require frequent, dynamic allocations and deallocations.

Heap Disadvantages

While heap memory offers many advantages, it also comes with its set of disadvantages:

- Hands-on Memory Management. In languages without garbage collection, heap memory requires explicit management. Developers must manually allocate and deallocate memory, which is error-prone and adds overhead.

- Memory Leaks. If memory is not deallocated once it is no longer needed, it leaks. The program's memory usage then keeps growing, which can eventually lead to out-of-memory errors, especially in long-running applications.

- Fragmentation. Memory on the heap is allocated and deallocated dynamically. This can lead to scattered, unused memory blocks (external fragmentation) or small wasted spaces within the allocated blocks (internal fragmentation).

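- Slower Allocation and Access. Allocating and freeing heap memory is generally slower than adjusting the stack pointer, because the allocator must find a suitable free block and maintain bookkeeping data. Accessing heap data can also be slower, since it goes through a pointer and is often less cache-friendly.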

- Dangling Pointers. A pointer that still refers to memory after that memory has been deallocated is a dangling pointer. Accessing or modifying data through such a pointer results in undefined behavior.

- Concurrency Issues. Accessing and modifying heap memory across multiple threads without proper synchronization can result in corrupt data.

- Potential for Bugs. Because heap memory is managed manually, there is also a risk of double-free errors, where the program attempts to deallocate memory that has already been deallocated. In C and C++, this is undefined behavior.

Note: Always deallocate memory from the heap when it is no longer needed to prevent memory leaks and unpredictable program behavior.

Stack vs. Heap: Differences

The following table highlights the main differences between stack and heap memory:

| FEATURE | STACK | HEAP |
|---|---|---|
| Allocation | The system allocates memory automatically. | The programmer requests memory explicitly, for example with malloc() or new. |
| Structure | Memory is allocated in a contiguous block (LIFO approach). | Memory blocks can be allocated and deallocated in any order. |
| Access Speed | Faster: allocation only adjusts the stack pointer, and data is usually cache-friendly. | Slower: the allocator must search for free blocks and manage metadata, and access goes through pointers. |
| Size Limit | Fixed maximum size, set by the operating system, linker, or thread settings. | Larger and more flexible, limited mainly by available system memory. |
| Accessibility | Bound by the call stack. | Accessible from any part of the code that holds a pointer or reference to it. |
| Usage | Suitable for small, short-lived data. | Suitable for large or long-lived data and for data whose size is only known at runtime. |
| Lifetime | Stack variables are automatically deallocated when their containing function or block exits. | Lasts until the programmer frees it or, in garbage-collected languages, until the garbage collector reclaims it. |
| Thread Safety | Each thread gets its own stack, so local variables are not shared between threads unless a pointer to them is passed along. | Shared by all threads; thread safety must be ensured manually, and a lack of synchronization can lead to concurrency issues. |
| Flexibility | The maximum size is fixed, typically when the program or thread starts, and cannot be changed at runtime. | Flexible and can grow or shrink as needed. |
| Fragmentation | Minimal to no fragmentation. | Can suffer from fragmentation, leading to inefficient use of memory over time. |
| Reliability | Not subject to memory leaks, since deallocation is automatic. | Can lead to memory leaks or unexpected behavior if not managed adequately. |

Stack vs. Heap: How to Choose?

Choosing between stack and heap memory allocation depends on the application and the scope and lifecycle of the data. This list contains practical advice on when to use stack and heap memory:

Use stack memory when:

- The data is only needed within a particular function or block, and its lifetime ends with that scope.

- The data is small and its size is known at compile time.

- Access speed is critical.

- You want to avoid manual memory management.

Use heap memory when:

- You need data to persist beyond the function that created it, or its lifetime is complex and cannot be determined at compile time.

- Dealing with larger data structures or when the size is unpredictable at compile time.

- You need explicit control over when memory is allocated and released.

- Data needs to be accessible from multiple functions or scopes.

- Data structures may need to be resized, like arrays that can grow.

Conclusion

You now understand the differences between stack and heap memory, including their strengths, trade-offs, and best-use scenarios.

Neither is universally better than the other, but learning how each works allows you to manage memory resources more efficiently and better cater to the needs of your application.